Connecting It All Together

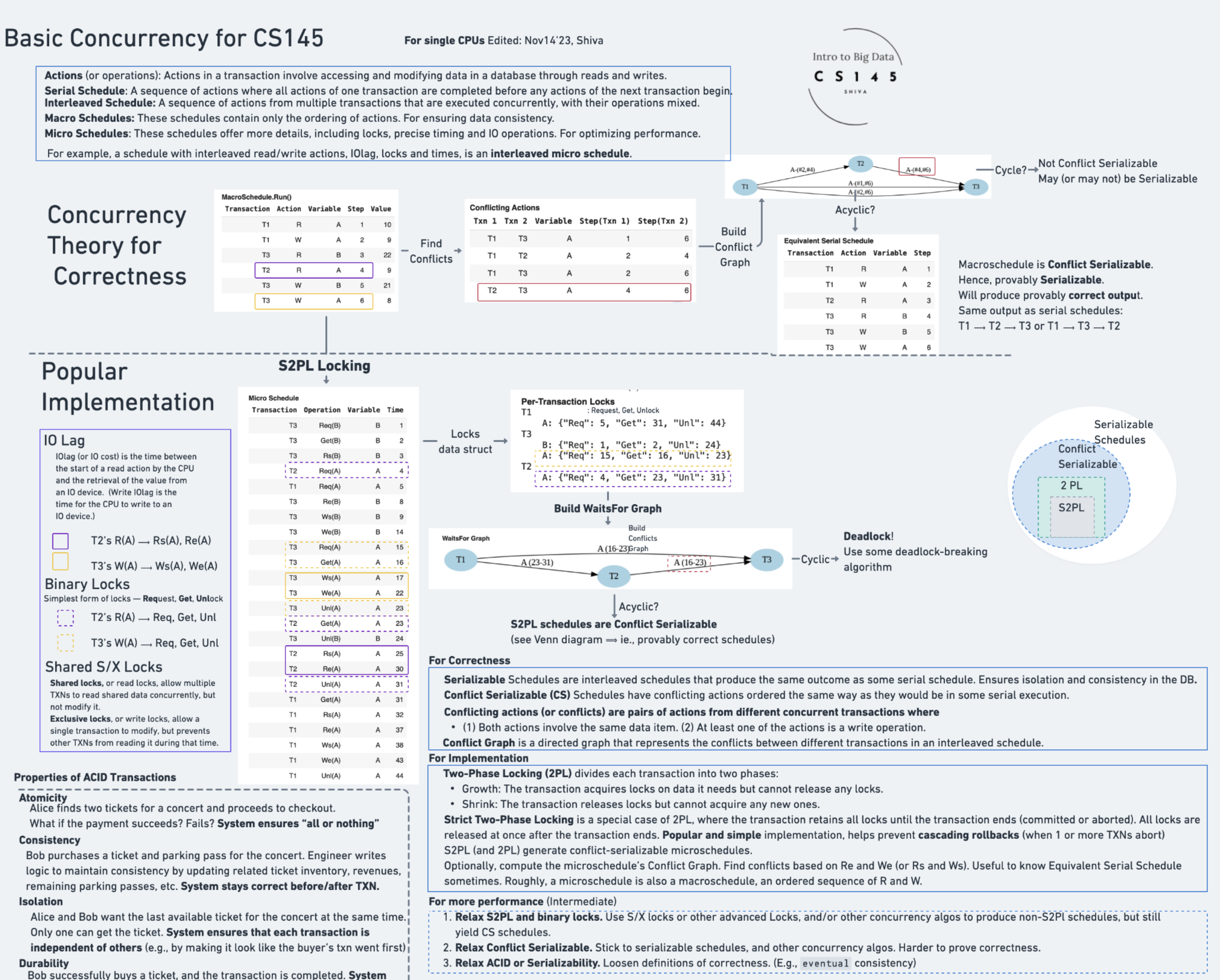

Concept. A complete transaction system layers three things: conflict-serializability (what correctness means), 2PL (the protocol that produces it), and lock granularity tradeoffs (the engineering knob). Together they let thousands of transactions run concurrently while still acting as if each ran alone.

Intuition. When Mickey's playlist update and Minnie's profile edit run at the same time, 2PL serializes them only on the rows they share, the lock manager blocks them on those rows alone (not the whole table), and the resulting schedule is provably equivalent to running them one-after-the-other. All the theory shows up in a single concurrent execution.

Implementation Motivation

We've covered the theory. Now let's connect the dots and see how real transaction systems are built.

The Goal: Implement 2PL locking (or S2PL) to produce conflict-serializable schedules and achieve significant speedups over serial schedules.

Implementation: S2PL in Practice

Sample Code for S2PL

# S2PL Implementation Pattern (Python pseudocode)

class Transaction:

def __init__(self, transaction_id):

self.id = transaction_id

self.locks_held = set()

self.state = "ACTIVE"

def read(self, data_item):

# Acquire shared lock

lock_manager.acquire_lock(self.id, data_item, "SHARED")

self.locks_held.add((data_item, "SHARED"))

# Perform read

value = database.read(data_item)

return value

def write(self, data_item, value):

# Acquire exclusive lock

lock_manager.acquire_lock(self.id, data_item, "EXCLUSIVE")

self.locks_held.add((data_item, "EXCLUSIVE"))

# Perform write

database.write(data_item, value)

def commit(self):

# S2PL: Release all locks at commit

for data_item, lock_type in self.locks_held:

lock_manager.release_lock(self.id, data_item)

self.locks_held.clear()

self.state = "COMMITTED"

def abort(self):

# Rollback changes (not shown)

# Release all locks

for data_item, lock_type in self.locks_held:

lock_manager.release_lock(self.id, data_item)

self.locks_held.clear()

self.state = "ABORTED"

Try it yourself: See the full implementation in the Transactions Colab

The Big Picture

Component Integration

How all the pieces work together:

1. Application Layer: Issues transactions

2. Transaction Manager: Coordinates execution

3. Lock Manager: Enforces 2PL protocol

4. Scheduler: Creates conflict-serializable schedules

5. Recovery Manager: Ensures durability

All working together to provide ACID guarantees!

The performance numbers (48 → 31 time units, ~35 % speedup; 10–100× at scale) come from the worked microschedule example in Microschedules.

The Power of Transaction Managers: Beyond Simple Locks

Transaction managers do more than just handle local locks: they orchestrate complex operations across space and time, at scale.

Distributed Lock Management

2PL works seamlessly across multiple machines and data centers

Example - Global Bank Transfer:

• NY server has account A

• SF server has account B

• Tokyo server has audit logs

Distributed TM coordinates:

• Acquires locks across systems

• Maintains 2PL protocol globally

• Enforces CS guarantees across the network

Intelligent Lock Planning

TMs can plan and schedule locks hours or days in advance

Example - Data Warehouse:

• 6-hour analytics job knows it needs table X at hour 3

• TM pre-reserves locks, optimizes schedule

• Batch jobs coordinate schedules to avoid contention

Smart Scheduling:

• Priority transactions get future lock reservations

• System predicts conflicts, adjusts timing

Extreme Scale Execution

Same 2PL theory applies from 2 to billions of transactions

Where This Runs Today:

• Stock Exchanges: Millions of trades/sec, zero conflicts

• Airlines: 50,000 flights, no double-bookings

• Banking: Trillions moved daily, perfect consistency

Performance at Scale:

• Google Spanner: Global consistent distributed transactions

• AWS Aurora: Fast decoupled storage

Summary: From Theory to Practice

Key Takeaways

Theory Foundation: Conflict-serializability ensures correctness

Implementation: 2PL/S2PL protocols enforce CS schedules

Result: Safe concurrent execution with massive speedups

Next

Recovery: Why We Need It → Concurrency control protects transactions from each other. Recovery protects them from crashes. Together they make ACID real.